Josef Ressel Center for User-friendly Secure Mobile Environments

Downloads

Contents

- Pan shot face database

- ShakeUnlock database

- Optimal derotation of 3D acceleration timeseries

- Device to user authentication

- SuperSmile Android ROM

Pan Shot Face Database

The Pan Shot Face Database contains visual and range recordings of human faces from different perspectives around the user's head, recorded with a set of different devices. It is designed for mobile authentication tests in which information from multiple perspectives is combined. The Pan Shot Face Database is publicly accessible for teaching and research.

Please cite the following publication when using the Pan Shot Face Database:

Please cite the following publication when using facial images from the Pan Shot Face Database that have been processed using our range-based segmentation approach (see "Kinect face datasets" below):

Original datasets

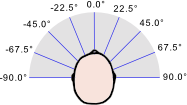

The Pan Shot Face Database contains facial images taken in a "pan shot" around participants' heads. In total it contains recordings of 30 participants from 9 perspectives, with 20 image sets per participant and perspective. Each such image set contains images from different recording devices (see image sample set below).

Pan shot sample set from -45.0° perspective (from left to right): colored DSLR image, Kinect colored image, Kinect range image (converted TIF32 to PNG8, removed background), LG Optimus 3D Max colored stereo images (left and right camera).

The following original datasets can be downloaded:

- DSLR images, slightly downsized (JPEG)

- DSLR images, cropped, grayscaled (PNG)

- Kinect colored images (PNG)

- Kinect range images (TIFF)

- LG Optimus stereo images (JPEG)

Kinect face datasets

Based on Kinect color and range images from the database, we segmented faces using their range information to reduce the amount of non-face information contained in face images (which can later be used e.g. in face recognition). We used range-based face template matching (see left sample) and optional, additional range-based snakes (see right sample) to segment faces in color and range images from different perspectives.

There are two types of segmented color and range face images available for download: with and without initial background removal before detecting and segmenting faces. With initial background removal, color and range image pixels corresponding to image information too far away from the camera (based on their range information) have been ignored during processing. Without initial background removal, all pixels have been employed during processing.

- Range template segmented color and range faces (JPEG, TIFF)

- Range template segmented color and range faces with snakes (JPEG, TIFF)

- Range template segmented color and range faces with initial background removal (JPEG, TIFF)

- Range template segmented color and range faces with snakes and initial background removal (JPEG, TIFF)
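
The initial background removal described above can be illustrated with a minimal sketch. It assumes that the color and range images are pixel-aligned and that a single distance threshold separates foreground from background. The function name `remove_background` and the threshold `max_range` are hypothetical and not taken from our processing code; pixels without a valid range reading are not treated specially here.

```python
import numpy as np


def remove_background(color, depth, max_range):
    """Zero out pixels whose range value exceeds max_range.

    color: (h, w, 3) array; depth: (h, w) array on the same pixel grid
    (pixel alignment is an assumption). max_range is a hypothetical
    threshold in the units of the range image.
    Returns masked copies of both images and the boolean foreground mask.
    """
    foreground = depth <= max_range
    masked_color = np.where(foreground[..., None], color, 0)
    masked_depth = np.where(foreground, depth, 0)
    return masked_color, masked_depth, foreground


# Usage with made-up 2x2 images: the bottom row is too far away.
depth = np.array([[0.8, 0.9], [2.5, 3.0]])
color = np.full((2, 2, 3), 200, dtype=np.uint8)
masked_color, masked_depth, foreground = remove_background(color, depth, max_range=1.5)
print(foreground)
```

Without initial background removal, this step would simply be skipped and all pixels passed on to face detection and segmentation.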

DSLR face datasets

For convenience we also provide DSLR face images in which faces have been detected and segmented using OpenCV HAAR and LBP cascades. Images within a rotation of 30 degrees from the central, frontal face position (0 degrees) have been processed using frontal face cascades; images with more rotation have been processed using profile cascades instead. Note that these cascades have not been designed for rotations between frontal and profile, which leads e.g. to some faces not being centered.
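
The choice between frontal and profile cascades can be written as a small rule. This is a sketch only: the function name `cascade_kind` is hypothetical, and treating exactly 30 degrees as still frontal is an assumption, since the text does not state on which side the boundary falls.

```python
FRONTAL_LIMIT_DEG = 30.0  # from the text; inclusive boundary is an assumption


def cascade_kind(perspective_deg):
    """Return 'frontal' or 'profile' for a perspective angle in degrees (0 = frontal)."""
    return "frontal" if abs(perspective_deg) <= FRONTAL_LIMIT_DEG else "profile"


# Usage with a few illustrative angles (not the full set of perspectives).
for angle in (0.0, 30.0, -45.0):
    print(angle, cascade_kind(angle))
```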

We provide the following DSLR face downloads:

- LBP- and HAAR detected and segmented faces: original size, no preprocessing and no false positives removed (PNG)

- LBP- and HAAR detected and segmented faces: original size, no preprocessing, majority of false positives removed by filtering small face images (PNG)

- LBP-detected and segmented faces: resized to (88/150)x150px, majority of false positives removed by filtering small face images: without histogram equalization (PNG) and with histogram equalization (PNG)

- HAAR-detected and segmented faces: resized to 150x150px, majority of false positives removed by filtering small face images: without histogram equalization (PNG) and with histogram equalization (PNG)

Pan shot face recognition demo (authentication framework face module)

Along with the Pan Shot Face Database and its evaluations, a pan shot face recognition prototype has been implemented for Android. The last version of this prototype has been integrated into the face module for the Android authentication framework, where its development is continued. The face module for the authentication framework can be obtained here:

- Repository at https://github.com/mobilesec/authentication-framework-module-face

- Precompiled Android authentication framework face module APK

Pan shot functionality is disabled by default in the repository and the APK; you can enable it in the settings. Be aware that processing pan shot samples takes much longer than processing frontal-only images.

ShakeUnlock Database

The ShakeUnlock Database contains different time series recordings of people pairwise shaking mobile devices. It's designed to be used for security related tasks, like unlocking mobile devices based on shaking. The ShakeUnlock Database is publicly accessible for teaching and research.

Please cite the following publication when using the ShakeUnlock Database:

Original datasets

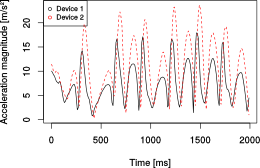

The 2014 data set of the ShakeUnlock Database contains 3-axis acceleration and gyroscope sensor data of 29 people shaking two devices together. The devices were a mobile phone (held in the hand) and a wrist watch (strapped to the wrist). Participants shook the devices for about 10 s while the device-embedded sensors recorded the corresponding time series. For each participant we recorded 20 data sets in total, divided into four groups (sitting/standing while shaking and using the dominant/non-dominant hand for shaking devices). The whole data set therefore contains 1160 time series recordings in the form of CSV files. Files always come in correlated pairs, which were recorded by the two devices being shaken together. Each file contains the 3-axis time series recording of a single device along with timestamps (the sample shows 2 s of acceleration magnitudes computed from these 3 individual axes for demonstration purposes). An empty file indicates that no recording was done (this is the case for gyroscope recordings of the watch for 3 participants, as the watch did not feature a gyroscope). The exact parameters per recording are stated in the corresponding metadata CSV file.

- Accelerometer recordings (in [m/s^2]): metadata file, time series files

- Gyroscope data (in [rad/s]): metadata file, time series files
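
The acceleration magnitudes shown in the sample can be computed from the three axes of a recording as follows. This is a minimal sketch: it assumes that each CSV row holds a timestamp followed by the x, y and z values and that there is no header row. Please check the metadata file for the actual layout; the function name `read_magnitudes` is hypothetical.

```python
import csv
import io
import math


def read_magnitudes(csv_file):
    """Return (timestamp, magnitude) pairs from rows of timestamp, x, y, z.

    The column order and the absence of a header row are assumptions.
    An empty file (no recording) yields an empty list.
    """
    result = []
    for row in csv.reader(csv_file):
        if not row:
            continue  # skip blank lines
        timestamp, x, y, z = (float(value) for value in row[:4])
        result.append((timestamp, math.sqrt(x * x + y * y + z * z)))
    return result


# Usage with made-up in-memory data instead of a real recording.
sample = io.StringIO("0.00,3.0,4.0,0.0\n0.01,0.0,0.0,9.81\n")
magnitudes = read_magnitudes(sample)
print(magnitudes)
```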

Preprocessed datasets

Active segments (periods of devices being actively shaken) have been extracted from the ShakeUnlock Database for evaluation purposes. For each participant, device and try, two active segments have been extracted to individual files. Active segment files again contain a timestamp along with the 3-axis time series recording. Empty files indicate that no active segment could be extracted. These active segments can be used directly in similarity evaluations without requiring previous active segment detection.

- Accelerometer active segments (in [m/s^2]): metadata file, time series files

Optimal derotation of 3D acceleration timeseries implementation

To compensate for the unknown spatial alignment of 3D accelerometers during time series recordings in ShakeUnlock, we derotate time series before comparing them. The derotation is performed so that two 3-axis time series are optimally rotated towards each other, so that their similarity is maximized (by minimizing their mean squared error).

- Optimal derotation code (currently in Java, R and Octave/Matlab): https://github.com/mobilesec/timeseries-derotation
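
To illustrate the idea of rotating one 3-axis time series onto another so that their mean squared error is minimized, the sketch below uses the Kabsch algorithm (an SVD-based least-squares rotation fit). This is a standard technique chosen for illustration and is not necessarily how the repository code works. It assumes both series are time-aligned and of equal length, and it fits a pure rotation about the origin without removing means. The function name `optimal_rotation` is hypothetical.

```python
import numpy as np


def optimal_rotation(a, b):
    """Rotation matrix R (3x3) minimizing the mean squared error between R @ a_i and b_i.

    a, b: arrays of shape (n, 3) holding time-aligned 3-axis samples.
    """
    h = a.T @ b                              # 3x3 cross-covariance of the two series
    u, _, vt = np.linalg.svd(h)
    d = np.sign(np.linalg.det(vt.T @ u.T))   # guard against reflections
    return vt.T @ np.diag([1.0, 1.0, d]) @ u.T


# Usage with synthetic data: series_b is series_a rotated by 40 degrees about z.
rng = np.random.default_rng(0)
series_a = rng.normal(size=(200, 3))
angle = np.deg2rad(40.0)
true_rotation = np.array([
    [np.cos(angle), -np.sin(angle), 0.0],
    [np.sin(angle), np.cos(angle), 0.0],
    [0.0, 0.0, 1.0],
])
series_b = series_a @ true_rotation.T

r = optimal_rotation(series_a, series_b)
derotated = series_a @ r.T                   # series_a expressed in the frame of series_b
mse = float(np.mean((derotated - series_b) ** 2))
print(mse)
```

With real recordings the remaining error after derotation is not zero; it can then serve as a dissimilarity measure between the two series.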

Please use the following reference to cite usage of our derotation approach:

Device-to-user authentication: users recognizing mobile device vibration patterns

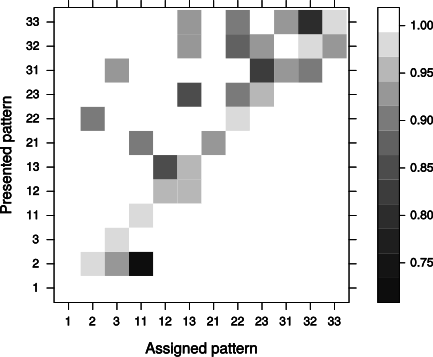

The preliminary device-to-user vibration authentication evaluation database contains records of participants with an assigned vibration pattern trying to verify whether other, presented vibration patterns are actually their vibration pattern. The goal was to show that users are able to successfully distinguish simple vibration patterns from their mobile phone, which can consequently be used as a device-to-user information feedback channel. The database contains evaluation recordings of 12 participants, doing at least 12 sets of vibration recognition each. For each set, participants were assigned a short vibration pattern (average pattern length: 465 ms) and had to decide for 16 random vibration patterns whether these were actually their assigned pattern (probability of being presented the assigned pattern: 5/16). The database contains a total of 2304 vibration recognition records - 1614 intended to be negative, and 898 intended to be positive - which reflect how well users were able to distinguish different vibration patterns.

Please use the following reference to cite usage of our device-to-user authentication and vibration pattern recognition approach:

Download vibration pattern recognition evaluation records

Evaluation records of 12 participants with at least 12 vibration pattern recognition sets (csv)

The csv file naming convention is as follows: VibrationRecognition_ParticipantID_SetNr_AssignedVibrationPattern. E.g. VibrationRecognition_01_01_33 represents set 01 of participant 01, with the actual vibration pattern assigned to that user for that set being 33.
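
File names following this convention can be split into their parts as sketched below. The sketch assumes that all three fields are purely numeric and that a `.csv` extension may or may not be present; the names `RecordName` and `parse_record_name` are hypothetical. Fields are kept as strings to preserve leading zeros.

```python
import re
from typing import NamedTuple


class RecordName(NamedTuple):
    participant_id: str
    set_nr: str
    assigned_pattern: str


# Numeric fields and the optional ".csv" suffix are assumptions.
_NAME = re.compile(r"^VibrationRecognition_(\d+)_(\d+)_(\d+)(?:\.csv)?$")


def parse_record_name(name):
    """Split a record file name into participant ID, set number and assigned pattern."""
    match = _NAME.match(name)
    if match is None:
        raise ValueError(f"unexpected file name: {name!r}")
    return RecordName(*match.groups())


# Usage with the example from the text.
record = parse_record_name("VibrationRecognition_01_01_33")
print(record)
```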

Download vibration pattern recognition evaluation Android application

The Android application used in the vibration pattern recognition evaluation is open source and available at https://github.com/mobilesec/device-to-user-authentication-vibration-bandwidth.

SuperSmile ROM

This ROM is based on Android 4.2.2 and includes components of our big picture for a user-friendly secure mobile environment. It is based on SuperNexus, an AOSP-based ROM for Samsung devices. The current release is still in alpha, but we are working on a stable version which will include multi-channel user authentication, secure element communication and virtualization on Android handsets.

DISCLAIMER: WE ARE NOT RESPONSIBLE IF YOU BRICK YOUR PHONE IN ANY WAY. BASIC COMPUTER SKILLS REQUIRED.

Currently supported devices

- Galaxy S2

- Galaxy S3

Stable versions

You can also download current nightly builds.